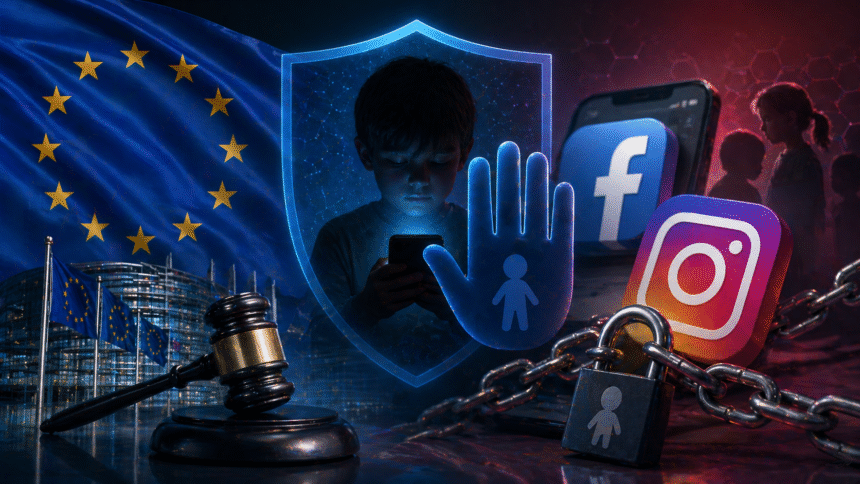

The European Union has escalated its pressure on Meta, accusing Facebook and Instagram of failing to do enough to keep children under the age of 13 off their platforms. The preliminary findings mark another major confrontation between Brussels and one of the world’s largest technology companies, as European regulators tighten enforcement of online safety rules.

The European Commission said Meta may have breached the EU’s Digital Services Act, a law designed to make large online platforms more accountable for the risks they create. According to regulators, Facebook and Instagram’s age-control systems are not strong enough to prevent underage children from creating accounts or staying active on the platforms.

The concern is not only about technical compliance. EU officials argue that children under 13 may be exposed to harmful content, cyberbullying, grooming risks, addictive design features and inappropriate online interactions. For Brussels, simply writing in the terms of service that users must be 13 or older is no longer enough. The Commission wants platforms to prove that they can enforce their own rules in practice.

The investigation found that children could bypass age restrictions simply by entering a false birth date, and it judged Meta’s tools for detecting and removing underage users to be insufficient. According to Reuters, EU regulators estimate that around 10% to 12% of children under 13 in Europe may be using Facebook or Instagram despite the platforms’ age rules.

Meta has rejected the EU’s findings, saying it already invests in technology to identify underage users and protect young people online. The company argues that age verification is an industry-wide challenge, not a problem limited to one platform. However, Brussels appears unconvinced, insisting that major platforms must take stronger responsibility because of their scale and influence.

If the preliminary findings are confirmed, Meta could face fines of up to 6% of its global annual revenue. The company now has the opportunity to respond to the Commission’s findings and propose additional corrective measures before a final decision is issued.

The case comes as Europe is moving toward a tougher approach to children’s digital safety. Several European governments have been discussing stricter age limits, stronger parental controls and more reliable age-verification systems for social media. The EU is also working on broader digital identity and verification tools, though these remain controversial because of privacy and security concerns.

For Meta, the case adds to a growing list of regulatory battles in Europe, including disputes over privacy, advertising, data use and platform design. But this investigation may carry greater political weight because it focuses on protecting children, a cause that has strong public support and is difficult for technology companies to dismiss.

The wider message from Brussels is clear: online platforms can no longer rely on weak age checks, vague safety promises or voluntary moderation tools. The EU wants enforceable systems that prevent children from entering spaces they are too young to use.

This case could become a turning point in Europe’s relationship with Big Tech. If the Commission confirms the violation, it may force Meta and other platforms to redesign how they verify age, monitor child safety and handle underage accounts across the continent.